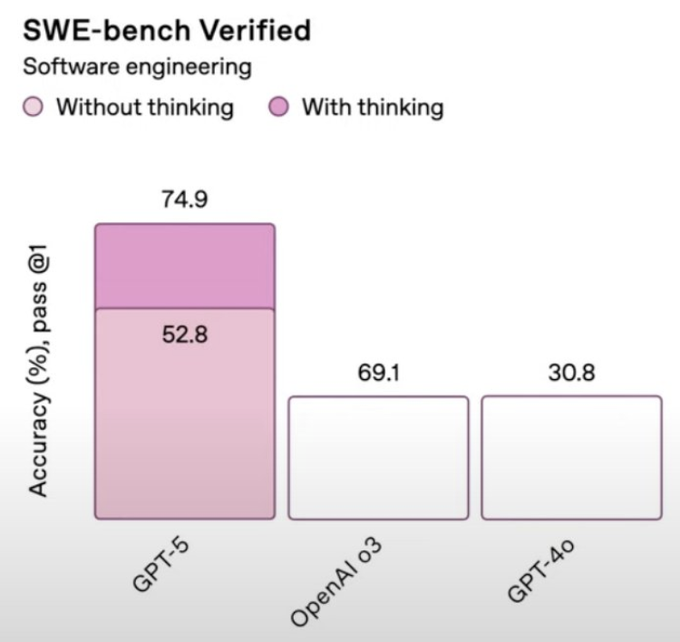

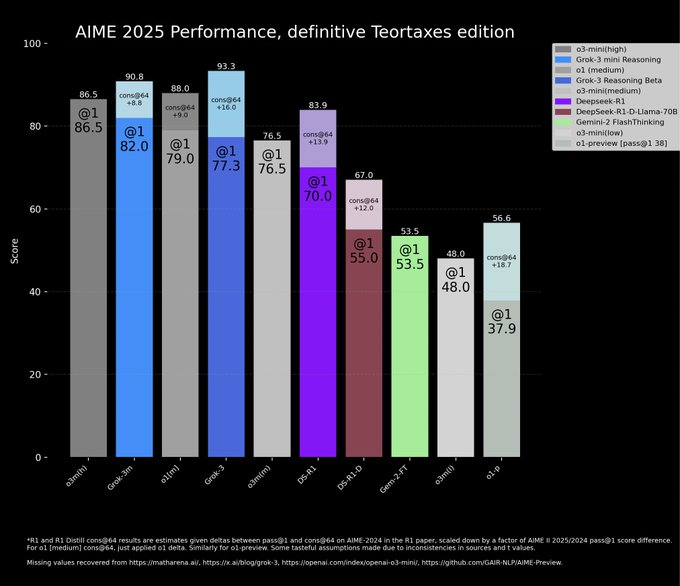

When xAI announced Grok 3 in February 2025, it prominently featured the model's score on the AIME 2025 math reasoning benchmark. The chart compared Grok 3 against multiple competitors but conspicuously omitted OpenAI's o3-mini cons@64 configuration, which had scored higher than Grok 3's best attempt.

Chart source: xAI Grok 3 Announcement

The Violation

1. The chart showed Grok 3's @1 score without including o3-mini's cons@64 score from the same benchmark.
2. The o3-mini cons@64 score, which was publicly available, exceeded Grok 3's performance.
3. The chart included other competitors' scores but selectively excluded the one that would have topped Grok 3.
4. There was no acknowledgment of the omission and no explanation for excluding that configuration.

Why This Matters

Selective data presentation transforms a middle-of-the-pack result into an apparent victory. When TechCrunch investigated with the headline 'Did xAI lie about Grok 3's benchmarks?', it highlighted how omission-based charting undermines trust in AI company claims.

Community Verdict

"xAI's Grok 3 chart is missing the o3-mini cons@64 score that actually beat Grok 3. This isn't an oversight—it's intentional chart manipulation."

"When you carefully select which competitor scores to show and which to hide, you're not benchmarking—you're marketing."

The Defense

Imagined company response

"xAI has not publicly addressed the omission. Some defenders argued that different sampling configurations aren't directly comparable, though the benchmark framework treats them as valid comparison points."
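For readers unfamiliar with the two configurations at issue: an @1 score grades a single sampled answer per problem, while cons@64 grades the most common answer among 64 samples per problem. The sketch below shows why the two can differ on the same model outputs. The data is invented, it uses 5 samples instead of 64, and the function names are hypothetical. It is not the scoring code used by xAI, OpenAI, or AIME.

```python
from collections import Counter

def score_at_1(samples_per_problem, answers):
    """Expected @1 score: average accuracy of individual samples."""
    total = 0.0
    for samples, answer in zip(samples_per_problem, answers):
        total += sum(s == answer for s in samples) / len(samples)
    return total / len(answers)

def score_cons_at_k(samples_per_problem, answers):
    """cons@k score: fraction of problems whose majority answer is correct."""
    correct = 0
    for samples, answer in zip(samples_per_problem, answers):
        majority, _count = Counter(samples).most_common(1)[0]
        correct += (majority == answer)
    return correct / len(answers)

# Invented data (assumption): 3 problems, 5 sampled answers each.
samples = [
    [42, 42, 17, 42, 8],  # 3 of 5 correct; majority (42) is correct
    [3, 3, 3, 7, 9],      # 3 of 5 correct; majority (3) is correct
    [1, 2, 2, 5, 6],      # 1 of 5 correct; majority (2) is wrong
]
answers = [42, 3, 5]

print(f"@1:     {score_at_1(samples, answers):.2f}")       # 0.47
print(f"cons@5: {score_cons_at_k(samples, answers):.2f}")  # 0.67
```

On this toy data the majority vote lifts the score from 0.47 to 0.67, so a chart that mixes the two configurations, or shows one model's consensus score while leaving out a competitor's, can change which model appears to lead.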
